| [Paper & Supp.] | [Code] | [Data & Models] |

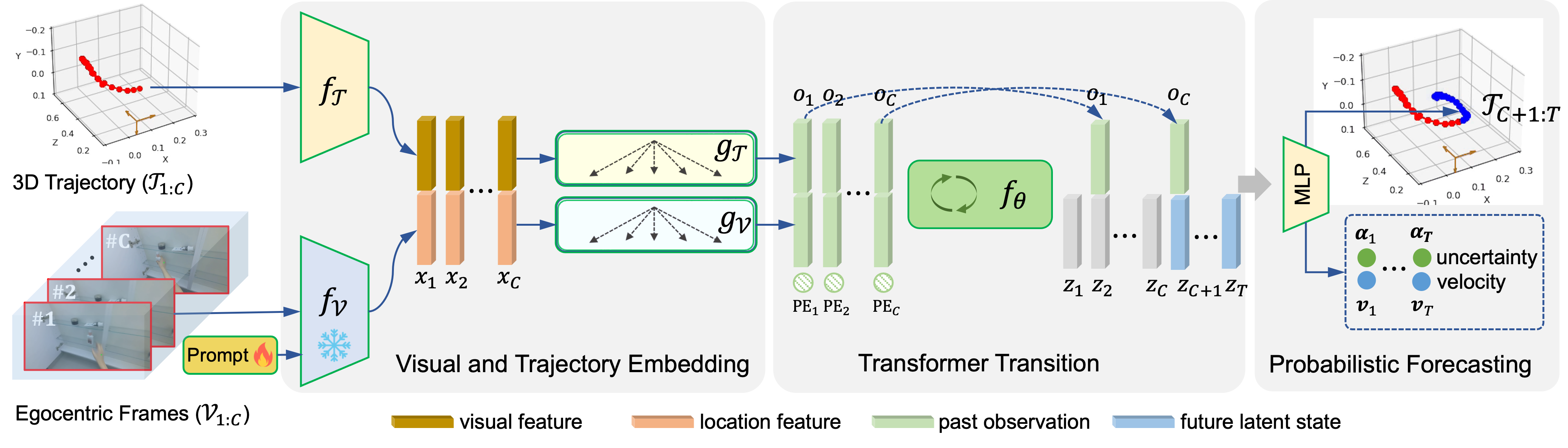

Hand trajectory forecasting from egocentric views is crucial for promptly understanding human intentions during interaction with AR/VR systems. However, existing methods handle this problem in 2D image space, which is inadequate for 3D real-world applications. In this paper, we set up an egocentric 3D hand trajectory forecasting task that aims to predict hand trajectories in 3D space from early observed RGB videos in a first-person view. To this end, we propose an uncertainty-aware state space Transformer (USST) that combines the strengths of the attention mechanism and aleatoric uncertainty within the framework of the classical state-space model. The model can be further enhanced by a velocity constraint and visual prompt tuning (VPT) on large vision transformers. Moreover, we develop an annotation workflow to collect high-quality 3D hand trajectories. Experimental results on the H2O and EgoPAT3D datasets demonstrate the superiority of USST for both 2D and 3D trajectory forecasting.
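To make the roles of aleatoric uncertainty and the velocity constraint more concrete, here is a minimal sketch of how such terms are commonly written for trajectory regression. It is not the USST implementation: the function names, the per-step log-variance input, and the exact form of both losses are assumptions chosen for illustration, following the standard heteroscedastic regression loss and a finite-difference velocity error.

```python
import math

def aleatoric_loss(pred, target, log_var):
    """Uncertainty-weighted squared error, averaged over time steps.

    pred, target: sequences of (x, y, z) points.
    log_var: one predicted log-variance per time step (hypothetical
    model output); large values down-weight the error but are penalized.
    """
    total = 0.0
    for p, t, s in zip(pred, target, log_var):
        sq_err = sum((pi - ti) ** 2 for pi, ti in zip(p, t))
        total += math.exp(-s) * sq_err + s
    return total / len(pred)

def velocity_loss(pred, target):
    """Mean squared error between finite-difference velocities."""
    def velocities(traj):
        return [[b - a for a, b in zip(p0, p1)]
                for p0, p1 in zip(traj, traj[1:])]
    vp, vt = velocities(pred), velocities(target)
    total = sum(sum((a - b) ** 2 for a, b in zip(u, v))
                for u, v in zip(vp, vt))
    return total / len(vp)

# Toy 3D trajectory and a prediction shifted by a constant offset in x.
target = [(0.0, 0.0, 0.5), (0.1, 0.0, 0.5), (0.2, 0.1, 0.6)]
pred = [(x + 0.1, y, z) for x, y, z in target]

loc = aleatoric_loss(pred, target, [0.0, 0.0, 0.0])
vel = velocity_loss(pred, target)
```

In this toy case the location term is nonzero because every point is shifted, while the velocity term is essentially zero because a constant offset does not change the motion between steps. This shows why the two terms carry complementary information.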


| Microwave (Seen) | Windowsill AC (Unseen) |
| Pantry Shelf (Seen) | Stove Top (Unseen) |


@inproceedings{BaoUSST_ICCV23,
  author = "Wentao Bao and Lele Chen and Libing Zeng and Zhong Li and Yi Xu and Junsong Yuan and Yu Kong",
  title = "Uncertainty-aware State Space Transformer for Egocentric 3D Hand Trajectory Forecasting",
  booktitle = "International Conference on Computer Vision (ICCV)",
  year = "2023"
}
